Elbit Systems is developing a multi-sensor fusion and display system, integrated into a new ‘Intelligence Management Center’ (IMC), that enables users of unmanned systems to control missions involving multiple sensors. Several customers have already ordered elements of the new center to enhance operational management of UAV assets, improve training and the development of operational doctrine, and better integrate UAVs with other missions.

The IMC offers unique mission control capabilities by providing the mission commander with presentation tools that dynamically access data from multiple sources. The data is formatted, rectified, fused and correlated to present sensor feeds within an integrated situational picture. The system is designed for operation by a single operator, who draws situational awareness and takes tactical decisions based on all the relevant sources and assets under his control.

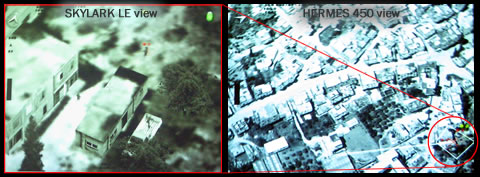

As the mission unfolds, images from synthetic aperture radar (SAR) and high- and low-altitude electro-optic (EO) sensors, as well as land observations, COMINT and SIGINT, are displayed over a wide-area interactive scene. The scene shows the situational picture alongside detailed views of the target as seen by the various sensors. This presentation lets the mission commander assess alternative scenarios from every view, helping to avoid tactical mistakes that result in missed opportunities or risk collateral damage or fratricide.

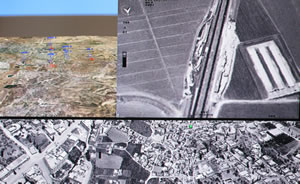

The IMC improves the flow of intelligence to and from the forward combat elements. Clear and detailed mission orders, dispatched by the user and the mission commander, stream directly to the UAS operators, while video intelligence is displayed simultaneously on the mission-station screens and at the management center, in both two and three dimensions. All data received from the UAS can be used by the ground forces’ C4I systems.

Above: One of two displays of the IMC, showing a composite view of different sensors (bottom row), the main sensor being watched (upper right) and the situational picture (upper left).

Below: The fused situational picture superimposed on an aerial image, with multiple sensor views embedded into the ‘footprints’ of each sensor, and the targets and assets taking part in the mission depicted in red and blue.